The Grant Application Is Dead. What Comes Next?

How federated protocols, local agents, and organisational self-sovereignty could replace the broken funding model. Come down the rabbit hole with me...

A foundation puts out a call. Organisations write applications. A panel reads them, scores them, funds some, rejects most. This process has been the backbone of grant-making for decades. It was designed for a world of information scarcity — where funders needed a structured mechanism to surface what organisations do, what they need, and whether they're credible.

That world is gone.

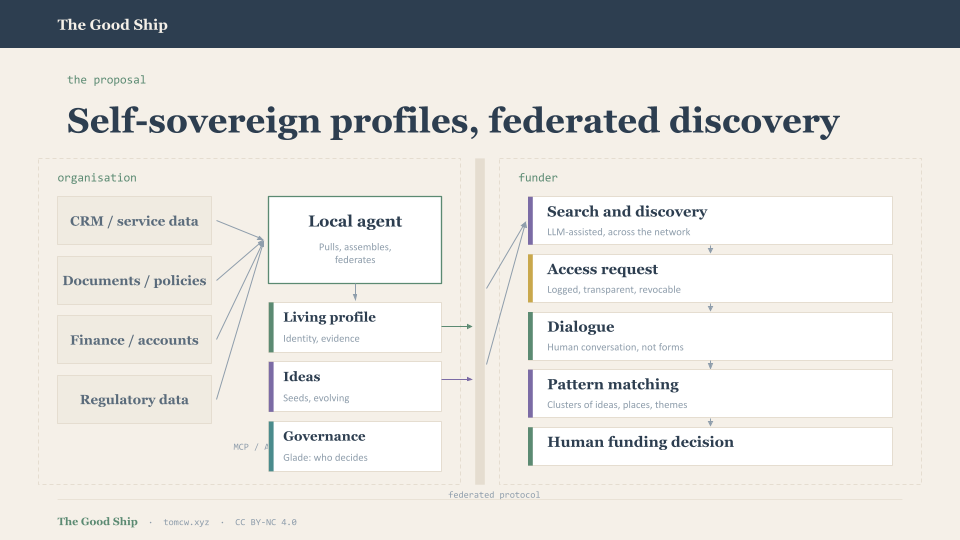

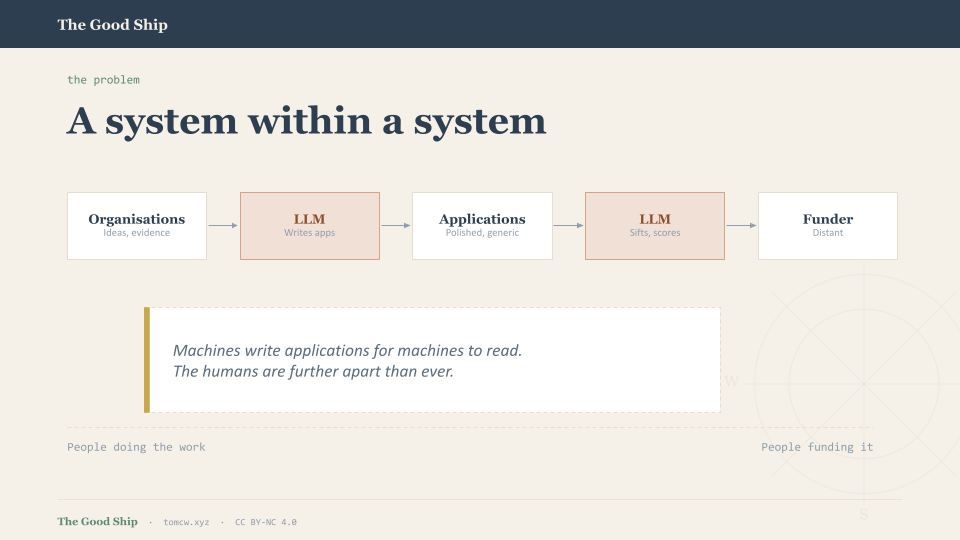

Large language models have made it ridiculously easy to produce polished-sounding grant applications. Funders are now drowning in submissions, many of them 'well-written', 'well-structured', and indistinguishable from one another: a soup of pretty good. The response has been predictable: use LLMs on the other side too, to sift and score. We're building a system within a system, machines writing applications for machines to read, and the human decisions that funding is supposed to enable are getting further away, not closer.

This isn't a problem we can optimise our way out of. The application form was an approach and a technology for filtering in a low-bandwidth information environment, where the effort involved meant you only applied if you were really committed, or at least reasonably committed. That environment no longer exists. We need to think about what comes after.

The problem isn't volume

It's tempting to frame this as a scale problem: too many applications, not enough capacity. But in my opinion the issue is deeper and more structural. The traditional model is built on information asymmetry as a filtering mechanism. The form exists to make applicants prove they're worthy. LLMs broke that filter by making proof cheap.

The application-as-form approach jumbles several things that should be separate: identity (who is this organisation?), evidence (what have they done and learned?), intent (what do they want to do next?), and fit (does this align with what we fund?).

All of these are bundled into a single, pass/fail, one-shot document, written to a deadline, shaped by word counts, and performed for an audience of assessors. Of course there are some who unbundle them, into EOIs and multi-stage approaches, but that was a solution for a time when filling in a form was a capacity issue.

Is there another way?

What if, instead of organisations applying to funders, the relationship ran the other way?

There's a school of thought that says we should just do away with applications entirely and become fully relational in grant-making. No forms, no calls, no competitive rounds. Just relationships. Funders get to know organisations over time, understand their work, build trust, and fund on the basis of that relationship. Approaches like Farming the Future and Regenerative Futures have moved in this direction, more connected, more trust-based, more human.

There's a lot to like about this. It removes the performative nature of the application form and centres the relationship. It lets funders understand context in a way that no written submission ever captures.

But can every foundation take this approach? And what are the potential risks if they do? Fully relational funding, without any balancing infrastructure, risks reverting to the boys' club. Who gets the relationships? Who gets invited into the room? Predominantly, it's those with existing access to networks, and that access is overwhelmingly a function of privilege: geography, education, professional background, confidence in certain social settings. The organisations doing the most important work in the most underserved communities are often the furthest from funder networks. A purely relational model doesn't just fail to fix this. It can make it worse.

So maybe the choice isn't "applications or relationships?" but "how do we build infrastructure that enables relationships to form on more equitable terms?"

That's what leads to the idea of a self-sovereign organisational profile. Imagine a world where each organisation maintains a living, structured, machine-readable representation of itself. Not one written for any specific funder or call, but maintained for its own purposes. It covers the things funders always want to know: what the organisation does, who it serves, how it's governed, its financial health, its evidence of impact, what it's learning, its culture and ways of working. It's updated continuously, fed by the organisation's own systems, not composed under deadline pressure.

Funders, in this model, become discoverers rather than gatekeepers. They search across a landscape of organisations, exploring profiles, understanding context, and reaching out when they see alignment. The application form disappears and in its place: a structured, ongoing, mutual relationship between resource and purpose. The infrastructure makes organisations discoverable regardless of whether they already have a relationship with the funder.

This isn't entirely new thinking. Elements of it exist in open data initiatives, in the 360Giving standard, in community foundation practice. But the enabling technologies (federated protocols, structured data standards, LLM-assisted search and sense-making) are now mature enough to make the full vision practical.

An organisational knowledge layer

The core idea is a self-sovereign organisational profile: owned by the organisation, hosted on their terms, and selectively shared with funders and others who request access.

Think of it as an org.txt for the social sector but richer, living, and federated. Part narrative, part structured data. Markdown for the human-readable story. JSON or YAML for the machine-readable structure. Together, they form a profile that can be read by a person, queried by software, and understood by an LLM.
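To make that concrete, here is a minimal sketch of how such a profile could be read. The file layout (a JSON block between `---` lines, followed by Markdown), the field names, and the example organisation are all my assumptions for illustration; none of this is an agreed standard.

```python
import json

# Hypothetical profile file: structured JSON between '---' lines, then the
# human-readable Markdown narrative. Layout and field names are assumptions.
PROFILE_TEXT = """---
{"name": "Example Community Trust", "legal_form": "CIO", "geography": ["Leeds"]}
---
# Who we are

We run a weekly food club and advice sessions.
"""


def parse_profile(text: str) -> tuple[dict, str]:
    """Split a profile into (structured data, Markdown narrative)."""
    # Splitting at most twice keeps any later '---' in the narrative intact.
    _, structured, narrative = text.split("---", 2)
    return json.loads(structured), narrative.strip()


data, story = parse_profile(PROFILE_TEXT)
print(data["name"])           # the machine-readable side
print(story.splitlines()[0])  # the human-readable side
```

The same file serves all three readers: a person reads the Markdown, software queries the JSON, and an LLM can take the whole thing as context.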

The profile covers several domains:

Identity — who the organisation is, its legal form, registration details, geography, scale, mission statement, and values. The stable facts that change slowly.

Evidence — what the organisation has done and what it has learned. Service delivery data, outcomes, case studies, evaluations. This is longitudinal, accumulating over time, telling a story about trajectory rather than presenting a snapshot.

Governance — how decisions are made, who's involved, what policies are in place. Board composition, safeguarding, financial controls. The things that signal organisational health and due diligence.

Culture — how the organisation works, what it values, how it treats people. This is harder to structure but matters enormously. It might include approaches to participation, equity commitments, learning practices.

Ideas — and this is where it gets interesting.

The ideas layer

Right now, the grant model assumes organisations are reactive, sitting there waiting to be asked and then responding within the constraints of a funding call. But organisations are always thinking about what they'd do with resource. They are the ones closest to communities, and they have ideas sitting in people's heads, in strategy documents, in board away-day notes, in conversations with people. These ideas are often invisible to funders until someone writes them into an application.

What if ideas were first-class objects in the system?

An organisation publishes an idea: structured, tagged, place- or theme-based, loosely costed, linked to their evidence and track record. Not a full proposal, more like a seed. It sits there, discoverable, evolving. The organisation can refine it over time, link it to emerging evidence, connect it to ideas from other organisations working in the same place or theme.
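As a rough sketch of what "first-class object" could mean in practice, an idea might be modelled like this. Every field name, the example organisation, and the example URL are hypothetical; the real shape would need to come out of a schema process with organisations and funders.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Idea:
    """A seed, not a proposal. All field names here are hypothetical."""
    title: str
    organisation: str
    places: list[str]
    themes: list[str]
    rough_cost_gbp: tuple[int, int]  # loose (low, high) range, not a budget
    evidence_links: list[str] = field(default_factory=list)
    related_ideas: list[str] = field(default_factory=list)
    history: list[tuple[date, str]] = field(default_factory=list)

    def refine(self, when: date, note: str) -> None:
        """Record a refinement so the idea's evolution stays visible."""
        self.history.append((when, note))


idea = Idea(
    title="Shared community kitchen",
    organisation="Example Community Trust",
    places=["Leeds"],
    themes=["food", "social isolation"],
    rough_cost_gbp=(20_000, 35_000),
)
idea.evidence_links.append("https://example.org/evaluations/food-club-2024")
idea.refine(date(2025, 3, 1), "Two local partners interested in co-hosting")
print(len(idea.history))
```

Keeping a history on the object is the point: the idea is allowed to develop in public rather than appearing fully formed at a deadline.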

Funders browse the landscape of ideas, not applications. They see clusters of intent forming: six organisations across two places, all circling similar work. They can signal interest, which is itself visible and logged. They might approach a cluster and say: "We'd fund this. Let's talk." Or they might see an idea that's been developing for two years, gathering evidence and collaborators, and recognise that it's ready.
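A very simple version of spotting those clusters is just grouping published ideas by shared theme tag. This sketch assumes exact tag matches and invented data; a real system would more likely need fuzzy or LLM-assisted matching, since organisations won't all use the same words.

```python
from collections import defaultdict

# Invented example data: four organisations across two places.
ideas = [
    {"org": "Org A", "place": "Leeds", "themes": ["food", "isolation"]},
    {"org": "Org B", "place": "Leeds", "themes": ["food"]},
    {"org": "Org C", "place": "Hull", "themes": ["food", "youth"]},
    {"org": "Org D", "place": "Hull", "themes": ["youth"]},
]


def find_clusters(ideas, min_orgs=3):
    """Return themes that at least `min_orgs` distinct organisations share."""
    by_theme = defaultdict(lambda: {"orgs": set(), "places": set()})
    for idea in ideas:
        for theme in idea["themes"]:
            by_theme[theme]["orgs"].add(idea["org"])
            by_theme[theme]["places"].add(idea["place"])
    return {
        theme: {"orgs": sorted(group["orgs"]), "places": sorted(group["places"])}
        for theme, group in by_theme.items()
        if len(group["orgs"]) >= min_orgs
    }


clusters = find_clusters(ideas)
print(clusters)
```

Here only "food" clears the threshold, with three organisations across both places, which is the kind of pattern a funder could approach as a cluster rather than as separate bids.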

This changes the temporality of funding fundamentally. Instead of artificial deadlines and competitive rounds, funding becomes responsive to organisational readiness and ecosystem dynamics, which shifts power towards the organisations doing the work.

This is something I've been exploring through OpenIdeas.uk: the concept that ideas should be visible, connectable, and persistent, not locked inside application forms that only one funder ever sees.

Federation: no platform, just a network

The worst version of this idea is a centralised platform, another portal where organisations create profiles, controlled by whoever runs it. Stop me if you've heard this story before. It creates new gatekeepers, new lock-in, new dependencies.

The better version is federated. Each organisation hosts its own profile, or has it hosted on its behalf, and the profiles communicate via an open protocol. No one owns the index. No single entity decides who's visible. The organisation is its own node in the network.

Two protocols are serious candidates: AT Protocol (the technology behind Bluesky) and ActivityPub (the W3C standard behind Mastodon and the wider fediverse).

AT Protocol

AT Protocol's strongest feature for this use case is account portability. An organisation's identity, based on a decentralised identifier (DID), travels with them. If a hosting provider disappears or becomes problematic, the organisation takes their data and moves. For organisations that have experienced platform lock-in, this is compelling. The DID also provides cryptographic verification of identity, which matters in a funding context where trust is essential.

The lexicon system is also relevant. AT Protocol lets you define custom record types, meaning you could define an idea, an evidence-summary, or an access-grant as formal lexicons, and they become first-class objects in the network. The firehose architecture means funders could subscribe to a real-time stream of updates across the network as new ideas are published, evidence is updated, and profiles change. The flow of information becomes continuous rather than periodic.
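For a feel of what that might look like, here is a sketch of an "idea" lexicon written as a Python dictionary, following the general shape of AT Protocol lexicon documents as I understand them. The identifier `org.example.funding.idea` and every field are invented, and the little checker is hand-rolled for illustration; it is not part of any AT Protocol SDK.

```python
# Hypothetical lexicon for an "idea" record. The NSID and fields are invented.
IDEA_LEXICON = {
    "lexicon": 1,
    "id": "org.example.funding.idea",
    "defs": {
        "main": {
            "type": "record",
            "key": "tid",
            "record": {
                "type": "object",
                "required": ["title", "themes", "createdAt"],
                "properties": {
                    "title": {"type": "string", "maxLength": 300},
                    "themes": {"type": "array", "items": {"type": "string"}},
                    "place": {"type": "string"},
                    "createdAt": {"type": "string", "format": "datetime"},
                },
            },
        }
    },
}


def missing_fields(record: dict, lexicon: dict) -> list[str]:
    """List required fields absent from a record (illustrative check only)."""
    schema = lexicon["defs"]["main"]["record"]
    return [name for name in schema["required"] if name not in record]


record = {
    "$type": IDEA_LEXICON["id"],
    "title": "Shared community kitchen",
    "themes": ["food"],
}
print(missing_fields(record, IDEA_LEXICON))
```

The example record is deliberately incomplete, so the check reports the missing `createdAt` field.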

But there are limitations too. AT Protocol is still heavily shaped by Bluesky's social media needs. The infrastructure is resource-intensive to self-host, which reintroduces some centralisation at the infrastructure layer. And most critically for our purposes, AT Protocol assumes a public-by-default model. The tiered access control we might need (public summaries, detailed profiles visible only to approved funders, full evidence bases available on request) would need to be built as an additional layer rather than being native to the protocol.

ActivityPub

ActivityPub brings maturity and ecosystem breadth. The actor model maps naturally to organisations: each organisation is an actor that publishes activities ("new idea published," "evidence updated," "access granted") to followers. Funders follow organisations. ActivityPub also handles private and semi-private content more naturally: you can address activities to specific actors or collections, which maps well to tiered access. The inbox/outbox model provides a built-in audit trail of interactions.
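Here is a small sketch of how addressing could carry the tiers, using plain ActivityStreams 2.0 JSON built as Python dictionaries. The actor URLs and object contents are made up, and the helper functions are mine; the `to` field and the special public collection URL come from the ActivityStreams vocabulary.

```python
AS_CONTEXT = "https://www.w3.org/ns/activitystreams"
PUBLIC = "https://www.w3.org/ns/activitystreams#Public"


def build_activity(kind: str, actor: str, obj: dict, audience: list[str]) -> dict:
    """Assemble an activity addressed to an explicit audience."""
    return {
        "@context": AS_CONTEXT,
        "type": kind,
        "actor": actor,
        "to": list(audience),
        "object": obj,
    }


def is_public(activity: dict) -> bool:
    """True when the activity is addressed to the public collection."""
    return PUBLIC in activity["to"]


ORG = "https://org.example/actor"        # hypothetical organisation actor
FUNDER = "https://funder.example/actor"  # hypothetical funder actor

# "New idea published": a public summary anyone can discover.
new_idea = build_activity(
    "Create", ORG, {"type": "Document", "name": "Shared community kitchen"}, [PUBLIC]
)
# "Evidence updated": detail addressed only to one approved funder.
evidence = build_activity(
    "Update", ORG, {"type": "Document", "name": "Food club evaluation"}, [FUNDER]
)
print(is_public(new_idea), is_public(evidence))
```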

The downside: identity is server-bound. If an organisation's server goes down, their identity goes with it. There is no native portability.

A pragmatic decision: protocol-agnostic, with a bias

Neither protocol is perfect as-is. AT Protocol offers better identity, portability, and structured data. ActivityPub offers better access control, maturity, and the pub/sub model we need.

So maybe the best path is to design the data model and vocabulary first, protocol-agnostic. Get that right, then implement federation beneath it.
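One way to picture "data model first, federation beneath" is a single neutral record with thin adapters that translate it for each protocol. This is only a sketch: the class names, the `org.example.funding.*` collection names, and the simplified payload shapes are my assumptions, and nothing here makes a network call.

```python
from typing import Protocol


class FederationAdapter(Protocol):
    """Anything that can turn a neutral record into a protocol payload."""
    def to_wire(self, kind: str, record: dict) -> dict: ...


class ActivityPubAdapter:
    def __init__(self, actor: str) -> None:
        self.actor = actor

    def to_wire(self, kind: str, record: dict) -> dict:
        # Simplified ActivityStreams-style activity wrapping the record.
        return {
            "@context": "https://www.w3.org/ns/activitystreams",
            "type": "Create",
            "actor": self.actor,
            "object": {"type": "Document", "name": record["title"]},
        }


class AtProtoAdapter:
    def to_wire(self, kind: str, record: dict) -> dict:
        # Simplified record payload; the collection name is hypothetical.
        collection = f"org.example.funding.{kind}"
        return {"collection": collection, "record": {"$type": collection, **record}}


def federate(kind: str, record: dict, adapters: list[FederationAdapter]) -> list[dict]:
    """Translate one neutral record for every configured protocol."""
    return [adapter.to_wire(kind, record) for adapter in adapters]


neutral = {"title": "Shared community kitchen", "themes": ["food"]}
payloads = federate(
    "idea", neutral, [ActivityPubAdapter("https://org.example/actor"), AtProtoAdapter()]
)
print([sorted(p) for p in payloads])
```

The neutral record never mentions either protocol, so swapping or adding a federation mechanism later means writing another adapter, not rewriting profiles.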

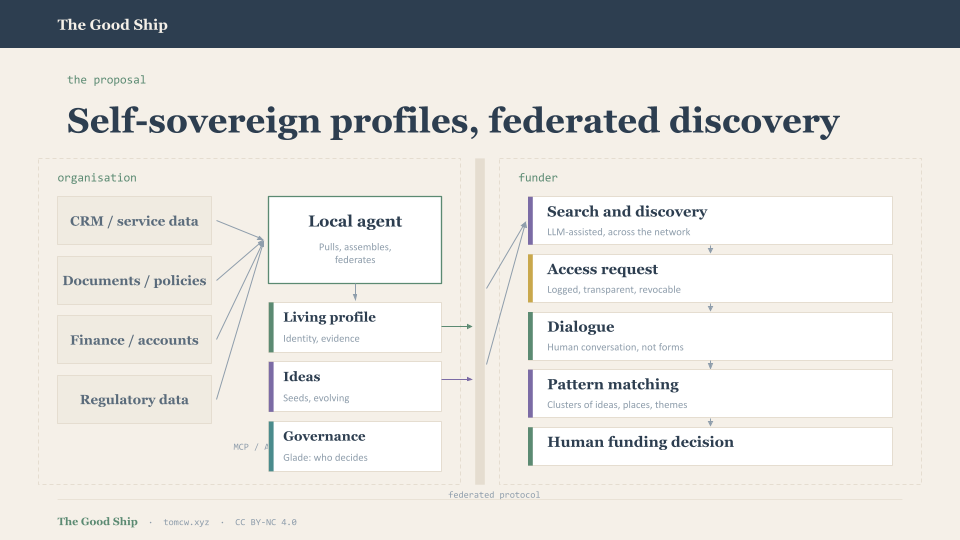

The central artefact isn't a platform or a protocol but a local agent running on or for each organisation.

This is the organisation's node in the network. It pulls, it assembles, it federates. It does the work so people don't have to.

- Pulls evidence from existing systems — CRM data, service delivery records, financial information, impact metrics. Organisations don't maintain yet another system; the agent connects to what they already use via APIs, MCP servers, database connections, and document indexing.

- Maintains the living profile — assembling structured data and narrative into the canonical representation. An LLM assists with summarisation, structuring, and keeping things current, but the organisation retains editorial control.

- Manages ideas — providing a space to draft, refine, tag, and publish ideas, linked to evidence and to ideas from other organisations.

- Handles access control — processing requests from funders, logging who sees what, managing tiered permissions. The organisation decides. The agent enforces.

- Speaks to the protocol — federating the profile outward via ActivityPub, AT Protocol, or both. The agent is where the protocol-agnostic data model meets the specific federation mechanism.
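The access-control responsibility is probably the easiest to sketch. Assuming three tiers (the names "public", "approved" and "full" are mine, loosely following the summaries, detailed profiles, and full evidence bases mentioned earlier), an agent could enforce permissions and log every request, including refused ones:

```python
from datetime import datetime, timezone

TIERS = ["public", "approved", "full"]  # hypothetical tiers, lowest to highest


class AccessController:
    """The organisation decides (grant/revoke); the agent enforces and logs."""

    def __init__(self) -> None:
        self.grants: dict[str, str] = {}  # funder id -> highest tier granted
        self.audit_log: list[dict] = []   # every request, allowed or not

    def grant(self, funder: str, tier: str) -> None:
        if tier not in TIERS:
            raise ValueError(f"unknown tier: {tier}")
        self.grants[funder] = tier

    def revoke(self, funder: str) -> None:
        self.grants.pop(funder, None)

    def request(self, funder: str, section_tier: str) -> bool:
        """Check a request against the funder's tier and record the outcome."""
        held = self.grants.get(funder, "public")
        allowed = TIERS.index(held) >= TIERS.index(section_tier)
        self.audit_log.append({
            "funder": funder,
            "section_tier": section_tier,
            "allowed": allowed,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return allowed


agent = AccessController()
agent.grant("funder-a", "approved")
print(agent.request("funder-a", "approved"))  # within the granted tier
print(agent.request("funder-a", "full"))      # above the granted tier
agent.revoke("funder-a")
print(agent.request("funder-a", "approved"))  # after revocation
```

Logging refusals as well as successes matters here: the audit trail is what lets an organisation see who has been looking, not just who got in.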

For some organisations, the agent runs on their own infrastructure. For others, it's a managed service hosted on their behalf but with their data remaining sovereign. The key is that the organisation owns the agent's output regardless of where it runs.

This architecture also means the agent can grow incrementally. An organisation might start with just a static profile generated from their website and Companies House data (something like what llmstxt.social already does). Over time, they connect their CRM, start publishing ideas, grant access to funders. Start small and build it up.

What this enables

For organisations: maintain your own truth, once, and share it on your terms. Stop rewriting the same information for every funder. Make your ideas visible before anyone asks. See who's looking at your work. Connect your ideas to others doing complementary work.

For funders: discover organisations doing relevant work, rather than waiting for them to find your call. See patterns and clusters across places and themes. Fund into ecosystems, not isolated bids.

For the system: make smaller organisations with strong practice more visible, not less. Create mutual transparency about who holds power and how it's exercised. Build a shared evidence layer that benefits everyone.

Some caution, obviously

This isn't without hard problems. Some of them:

Capacity. Even with low-friction tooling, some organisations won't be able to maintain a profile. The system needs to account for this.

Equity by design, not afterthought. If the system makes it easier for well-resourced organisations to maintain rich, polished profiles while smaller organisations struggle to keep theirs current, we've just moved the privilege barrier from "who writes the best application" to "who maintains the best profile."

Governance of the standard. Who maintains the schema? How does it evolve? This needs to be stewarded by a body that represents both organisations and funders, and ideally by people who understand open standards development.

Preventing surveillance. If funders can see everything, this could become a new panopticon. The access control layer and audit trail are essential, not optional. Organisations must be able to see exactly who has looked at what, and revoke access. The power relationship must be genuinely mutual.

Interoperability with existing systems. The CRM landscape alone is fragmented: Salesforce, Lamplight, Charitylog, Airtable, spreadsheets. The local agent needs robust MCP integrations for all of these.

Funder adoption. Funders would need to move from designing calls and reading applications to searching, discovering, and entering dialogue. The system probably needs to coexist with traditional grant-making for a long transition period.

Building on what exists

This isn't starting from zero. But it's worth being honest about the limitations of what already exists, because they point toward what needs to be different.

Open Referral UK and HSDS provide a standard for describing services: what's available, where, for whom. But Open Referral is defined by services, not by organisations and their ideas. It assumes things are relatively static: a service exists, it has a location and eligibility criteria, you can look it up. That's useful for directories, but it's not how organisations or ideas work in reality.

360Giving has demonstrated that open grant data is possible and valuable. But it describes funding after the fact: who gave what to whom. It doesn't address the question of how resource finds purpose in the first place.

The Charity Commission is an interesting case. They're increasingly requiring (or planning to require) organisations to report on "impact", whatever that means (this could be its own post entirely, because the gap between what regulators mean by impact and what organisations experience as meaningful change is vast). But the direction of travel is clear: more structured reporting, more data, more accountability. The use case driving this is regulatory, not necessarily about improving anything for organisations or the people they work with.

And so, if things are heading this way anyway, shouldn't we try to do it better? Shouldn't we build the infrastructure so that the same structured data that satisfies a regulatory requirement also makes an organisation discoverable to funders, also connects their ideas to potential collaborators, also tells a richer story than a tick-box compliance exercise?

What comes next

This is an early exploration, not a finished concept; sometimes the writing is the thinking. If it were left to me, I'd maybe be thinking about the following: define the schema properly, with input from organisations and funders. Build a reference agent, starting simple, with a static profile and manual updates, adding system integrations over time. Then test it with real relationships: a willing funder, a small cohort of organisations, a genuine alternative to a funding round. See what breaks.

Or we can just keep going round and round, ever chasing efficiencies and optimising a broken system.